Responsive sites are here and have been for a while now. I think they’re great, but I can’t help feeling that a lot of people are missing an important point.

It’s a responsive site, but it’s not responding!

After years of work you’re finally ready to show your brand new site off to the world. You smile, take the compliments and proudly say it’s responsive. You talk about all the hours spent on the design, on prototyping ideas, on user testing and on getting the content just right. But something isn’t quite right: the site feels sluggish and slow. That’s because an important step was rushed: the build.

It seems to me that many site owners see the build as a barrier to getting a website operational. “When will it be ready?” is a question I hear a lot. It’s a fair and important question, but to me it already says “hurry up, please”.

We should be educating our clients about the importance of page performance: discussing how we load imagery and other static files, offering options for implementing a CDN, and setting up processes for compressing files. We mustn’t let these things be pushed into “phase 2” development in an attempt to reach an earlier release date.

Just this week, Halifax updated its website and made it “responsive”. It even provides a definition of what responsive means:

It means that no matter what device you’re using to visit halifax.co.uk, the site will adapt to fit to your screen. You can see and interact with the same content, from mobile phones to home computer screens.

Halifax

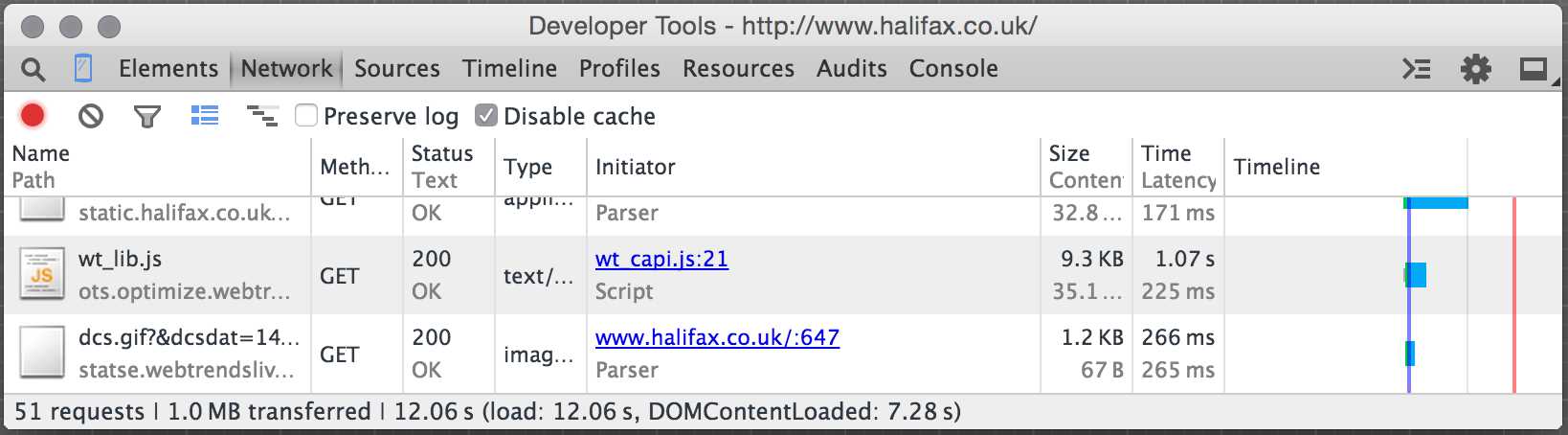

Well, OK. But let’s look at the load time of the new site over a 3G network.

This diagram shows that content doesn’t appear for over seven seconds, which is way too long. Surely you cannot claim that a site is responsive if it takes this long to load on a mobile device?

So what could be done to improve Halifax’s site?

Google PageSpeed Insights is a helpful starting place. Its scoring can sometimes seem brutal over seemingly “little” things, but it gives you a feel for where you stand (Halifax’s mobile score of 36/100 speaks volumes).

I would recommend that Halifax focuses on the following three major issues and potential fixes to help improve site performance.

Enable compression

Enabling compression for the Halifax homepage could save 716 KiB, or 83% of the size of the affected files. But what does this achieve? Surely compressing content on the server only to decompress it on the client just adds extra strain? Yes, it does add some CPU time, but the time saved by sending fewer bytes over the network is greater than the time spent compressing and decompressing them.
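To get a feel for why that trade-off usually pays off, here is a minimal Python sketch using the standard library’s `gzip` module. It compresses a payload the way a server would before sending it, decompresses it the way the browser would, and reports how many bytes were saved. The helper name `compression_saving` and the sample markup are made up for illustration, and in production the web server, not your application code, would normally do the compressing.

```python
import gzip
from typing import Tuple


def compression_saving(payload: bytes, level: int = 6) -> Tuple[int, int, float]:
    """Return (original size, gzipped size, percentage saved) for a payload."""
    if not payload:
        raise ValueError("payload is empty")
    # Server side: compress the response body before it goes over the network.
    # Level 6 matches zlib's usual default balance of speed versus size.
    compressed = gzip.compress(payload, compresslevel=level)
    # Client side: the browser decompresses and must get back identical bytes.
    if gzip.decompress(compressed) != payload:
        raise ValueError("round trip failed")
    saved = 100 * (1 - len(compressed) / len(payload))
    return len(payload), len(compressed), saved


# Hypothetical, deliberately repetitive markup: HTML, CSS and JavaScript
# repeat tag names, class names and keywords, which gzip exploits.
sample = ('<div class="product-card"><h2>Savings account</h2>'
          '<p>Interest paid monthly.</p></div>\n' * 200).encode("utf-8")

original, compressed, saved = compression_saving(sample)
print(f"{original} bytes -> {compressed} bytes ({saved:.0f}% smaller)")
```

Text this repetitive compresses unusually well, so treat the printed figure as an illustration of the mechanism rather than a benchmark.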

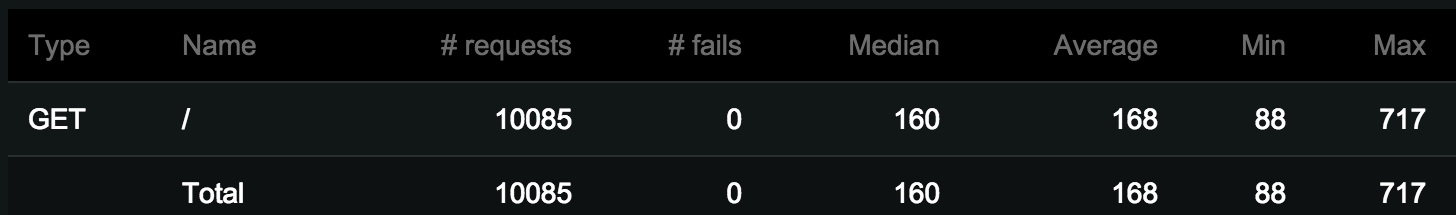

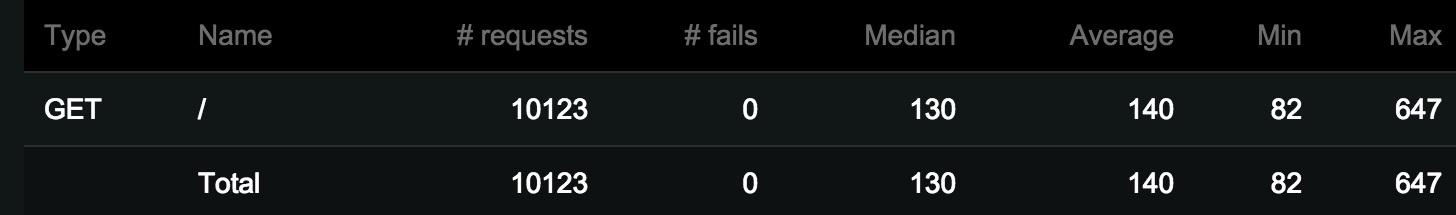

To demonstrate this, I ran a small experiment that sent just over 10,000 requests (50 active users requesting the site every second) to compressed and uncompressed versions of a homepage. The first graphic shows response times for the uncompressed page; the second shows the same test with the compressed version. As you can see, compression gives a significant 17% saving in response time.

Eliminate render-blocking JavaScript and CSS in above-the-fold content

There are nine script files and eight CSS files blocking the page from rendering. This is almost certainly why the page takes over seven seconds to start showing content. It is harder to fix than the other issues, because the page may depend on these files, and loading them in the wrong order can cause problems of its own.

Regardless, it is a big issue that needs careful thought. In general, though, putting all your JavaScript files at the bottom of the page stops them from blocking rendering. You could get a little fancier and load non-essential JavaScript files via an AJAX call. Taking this even further, since a website should work without any JavaScript, you could load all of your scripts this way.
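A related approach, offered here as an assumption rather than anything Halifax uses, is to add the standard HTML `defer` attribute to external scripts instead of physically moving the tags. Deferred scripts download while the page is being parsed and then run in document order, so they stop blocking rendering without breaking the ordering that dependent scripts rely on. The sketch below treats this as a hypothetical build step; the function name `defer_external_scripts` is made up, and a regular expression is only good enough for simple, predictable markup (a real build would use a proper HTML parser).

```python
import re

# An opening <script ...> tag that carries a src attribute (i.e. an external file).
SCRIPT_TAG = re.compile(r"<script\b([^>]*\ssrc\s*=[^>]*)>", re.IGNORECASE)
# A bare defer/async attribute already present in the tag.
NON_BLOCKING = re.compile(r"(?:^|\s)(?:defer|async)(?=\s|=|$)", re.IGNORECASE)


def defer_external_scripts(html: str) -> str:
    """Add `defer` to external <script src=...> tags so they don't block rendering.

    Inline scripts (no src) are left alone, because `defer` has no effect on them.
    """
    def add_defer(match: "re.Match[str]") -> str:
        attrs = match.group(1)
        if NON_BLOCKING.search(attrs):
            return match.group(0)  # already non-blocking, leave as-is
        return f"<script{attrs} defer>"

    return SCRIPT_TAG.sub(add_defer, html)


page = ('<head><script src="jquery.js"></script>'
        '<script>var ready = true;</script>'
        '<script async src="analytics.js"></script></head>')
print(defer_external_scripts(page))
```

Using `defer` rather than `async` is the deliberate choice here: `async` scripts run as soon as they arrive, in any order, which is exactly the dependency risk the original paragraph warns about.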

Leverage browser caching

You can leverage browser caching by adding a few lines to a .htaccess file (Apache) or a web.config file (IIS). Not doing so makes no sense. Images, CSS, fonts and JavaScript (and more) rarely change, so why not tell the browser to reuse the copy it already has? When a file does change, you can rename it or append a version number to its query string so that the browser fetches the new version.
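The versioning step can be automated. Below is a minimal Python sketch, assuming a build step that derives a short hash from each file’s contents and uses it either in the filename or in the query string, plus a long-lived `Cache-Control` header for those files. The helper names and the one-year lifetime are illustrative choices, not values from any particular server; on Apache or IIS the header itself would still be set in `.htaccess` or `web.config`.

```python
import hashlib
from pathlib import PurePosixPath
from typing import Dict

ONE_YEAR = 365 * 24 * 60 * 60  # 31,536,000 seconds


def content_hash(content: bytes, length: int = 8) -> str:
    """Short fingerprint that changes whenever the file's bytes change."""
    return hashlib.sha256(content).hexdigest()[:length]


def versioned_name(path: str, content: bytes) -> str:
    """Rename the file, e.g. css/site.css -> css/site.<hash>.css."""
    p = PurePosixPath(path)
    return str(p.with_name(f"{p.stem}.{content_hash(content)}{p.suffix}"))


def versioned_query(path: str, content: bytes) -> str:
    """Alternative: keep the name and append the version to the query string."""
    return f"{path}?v={content_hash(content)}"


def cache_headers(max_age: int = ONE_YEAR) -> Dict[str, str]:
    """Tell the browser it may reuse the file for max_age seconds without asking."""
    # "immutable" (RFC 8246) signals that the content at this URL never changes.
    return {"Cache-Control": f"public, max-age={max_age}, immutable"}


css = b"body { color: navy; }"
print(versioned_name("css/site.css", css))
print(versioned_query("css/site.css", css))
print(cache_headers())
```

Because the hash comes from the file’s contents, an unchanged file keeps the same URL and stays cached, while an edited file gets a URL the browser has never seen and must download.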

Is it really worth it?

You bet it is! If you really want a site to be responsive, you need to tackle all the issues, and site performance is definitely one of them. It’s great to see people doing it, and Smashing Magazine has published an excellent case study showing how they achieved it.